Headless CMSs such as Contentful and microCMS deliver content through an API, which gives you a great deal of freedom in how you build the front end.

These services impose API rate limits, so your implementation has to account for the possibility that API access is cut off once a certain number of requests or a certain transfer volume is exceeded.

The challenge here is that making an API request on every render in the browser is not recommended.

This is because you cannot control when, or how often, requests are sent if they are triggered by user actions.

For a blog, for example, you can use SSG so that API requests are made only at build time and never in response to a user request.

In this article, we consider caching strategies for rate-limited APIs that also work with SSR and CSR.

API request rate

The first step is to investigate the service limits.

They vary by pricing plan; here we refer to the Free Plan.

Contentful API Limits

Contentful's APIs are divided into the CDA (Content Delivery API), a read API for published Entries and Assets; the CPA (Content Preview API), which can also retrieve unpublished drafts; and the CMA (Content Management API), which can retrieve scheduled publication information.

Check the limits of each API you intend to use.

Usage Limit

- Total API calls (CMA, CPA, CDA): 1 million per month

- Bandwidth: 0.85 TB per month

Technical Limits

- CDA calls: 55 requests per second

- CMA calls: 7 requests per second

- CPA calls: 14 requests per second

microCMS API Limits

- GET API calls: 60 requests per second

- WRITE API calls: 5 requests per second

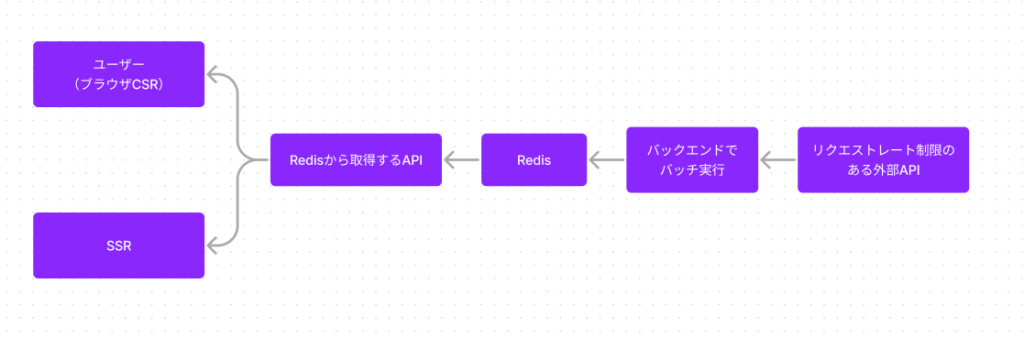

Caching strategy

The per-second request limits, along with the other restrictions, can be dealt with by using a cache.

The larger the service grows and the higher the load, the more likely it is to exceed the request rate.

Therefore, we consider a caching design that meets the following requirements.

- SSG is not used

- User requests never trigger API requests to the CMS service

- The operator can monitor the number of API requests

- Content can be updated at a time chosen by the operator

Make API requests to the CMS service only from the back end.

A batch process retrieves the data from the API and stores it in a Redis cache, at a pace that respects the requests-per-second limit and the required update frequency.
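The batch step can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a definitive implementation: `refresh_cache`, `fetch_entry`, the `cms:entry:` key prefix, and the 10 requests-per-second default are all hypothetical names and values chosen for the example. `InMemoryCache` is a stand-in so the sketch runs without a Redis server; a redis-py client, which also offers `set` and `get`, could be passed in its place.

```python
import json
import time
from typing import Callable, Dict, Iterable, Optional


class InMemoryCache:
    """Stand-in for a Redis client so the sketch runs without a server."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def set(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


def refresh_cache(
    content_ids: Iterable[str],
    fetch_entry: Callable[[str], dict],  # hypothetical wrapper around the CMS API
    cache,
    max_requests_per_second: float = 10.0,  # assumed value, set below the plan limit
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Fetch each entry once, throttled, and store it in the cache.

    Returns the number of API requests made so the operator can log it.
    """
    min_interval = 1.0 / max_requests_per_second
    request_count = 0
    for content_id in content_ids:
        started = time.monotonic()
        entry = fetch_entry(content_id)  # the only place the CMS API is called
        request_count += 1
        cache.set(f"cms:entry:{content_id}", json.dumps(entry))
        # Wait out the rest of the interval to stay under the per-second limit.
        elapsed = time.monotonic() - started
        if elapsed < min_interval:
            sleep(min_interval - elapsed)
    return request_count


if __name__ == "__main__":
    def fake_fetch(content_id: str) -> dict:
        # Replace with a real API call in practice.
        return {"id": content_id, "title": f"Post {content_id}"}

    demo_cache = InMemoryCache()
    made = refresh_cache(["a", "b", "c"], fake_fetch, demo_cache, max_requests_per_second=50)
    print(f"API requests made in this run: {made}")
```

Returning the request count from each run gives the operator a single number to log or graph, which addresses the monitoring requirement listed above.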

Requests from the front end read only from Redis and never call the CMS API.

This way the number of API requests is controlled entirely by the back end, and users never hit the API directly.
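The read path might look like the sketch below. The names `get_entry_for_user`, `DictCache`, and the `cms:entry:` key prefix are assumptions made for illustration. The point it shows is that a cache miss returns nothing rather than falling back to the CMS API, so user traffic cannot generate API requests.

```python
import json
from typing import Dict, Optional, Union


class DictCache:
    """Stand-in for a Redis client; only `get` is needed on the read path."""

    def __init__(self, data: Dict[str, Union[str, bytes]]) -> None:
        self._data = data

    def get(self, key: str) -> Optional[Union[str, bytes]]:
        return self._data.get(key)


def get_entry_for_user(cache, content_id: str) -> Optional[dict]:
    """Serve a user request from the cache only."""
    raw = cache.get(f"cms:entry:{content_id}")
    if raw is None:
        # Deliberately no fallback to the CMS API: a miss is reported as
        # "not available" and the next batch run is expected to fill it.
        return None
    return json.loads(raw)  # json.loads accepts both str and bytes


if __name__ == "__main__":
    cache = DictCache({"cms:entry:a": '{"id": "a", "title": "Post a"}'})
    print(get_entry_for_user(cache, "a"))
    print(get_entry_for_user(cache, "missing"))
```

The trade-off of this design is that content not yet cached is unavailable until the next batch run, which is the price of keeping the request count fully under the operator's control.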

If the batch runs every 5 minutes, the update frequency should not cause any major operational problems.
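As a rough check of how a 5-minute batch fits within a monthly quota, the arithmetic can be written out as below. It uses the 1 million calls per month figure listed above and assumes a 30-day month; the function names are hypothetical, and the requests-per-run value depends entirely on how much content your batch fetches.

```python
def runs_per_month(interval_minutes: int, days: int = 30) -> int:
    """Number of batch runs in a month of `days` days."""
    return (24 * 60 // interval_minutes) * days


def monthly_requests(interval_minutes: int, requests_per_run: int, days: int = 30) -> int:
    """Total API requests per month for a fixed-size batch."""
    return runs_per_month(interval_minutes, days) * requests_per_run


def max_requests_per_run(monthly_quota: int, interval_minutes: int, days: int = 30) -> int:
    """Largest batch size that still fits within the monthly quota."""
    return monthly_quota // runs_per_month(interval_minutes, days)


if __name__ == "__main__":
    print(runs_per_month(5))                   # 8640 runs in 30 days
    print(monthly_requests(5, 100))            # 864000 requests per month
    print(max_requests_per_run(1_000_000, 5))  # 115 requests per run
```

Under these assumptions, a 5-minute batch can make about 115 requests per run before reaching 1 million calls per month, so larger content sets would need a longer interval or fewer requests per run.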

Summary

Whenever you use an external API, take its limits into account in your design.

During development and testing the limits are hard to notice because only you are hitting the API; when they are finally reached in production, it can become a big problem.